Difference between revisions of "AI benchmarks"

KevinYager (talk | contribs) (→Methods)

KevinYager (talk | contribs) (→Task Length)

==Task Length==

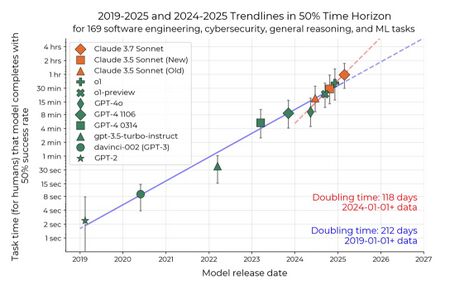

* 2025-03: [https://arxiv.org/abs/2503.14499 Measuring AI Ability to Complete Long Tasks]

[[Image:GmZHL8xWQAAtFlF.jpeg|450px]]

Revision as of 18:02, 19 March 2025

Methods

- AidanBench: Evaluating Novel Idea Generation on Open-Ended Questions (code)

- ZebraLogic: On the Scaling Limits of LLMs for Logical Reasoning. Assess reasoning using puzzles of tunable complexity.

Task Length

Assess Specific Attributes

Various

- LMSYS: Human preference ranking leaderboard

- Tracking AI: "IQ" leaderboard

- Vectara Hallucination Leaderboard

- LiveBench: A Challenging, Contamination-Free LLM Benchmark

Software/Coding

Creativity

Reasoning

- ENIGMAEVAL: "reasoning" leaderboard (paper)

Assistant/Agentic

- GAIA: a benchmark for General AI Assistants

- Galileo AI Agent Leaderboard

- Smolagents LLM Leaderboard: LLMs powering agents

Science

See: Science Benchmarks