AI benchmarks

Revision as of 16:26, 14 April 2025 by KevinYager


General

- Models Table (lifearchitect.ai)

- Artificial Analysis

- Epoch AI

Methods

- AidanBench: Evaluating Novel Idea Generation on Open-Ended Questions (code)

  - Leaderboard

  - Suggestion to use Borda count

  - 2025-04: added Quasar Alpha, Optimus Alpha, Llama-4 Scout and Llama-4 Maverick

- ZebraLogic: On the Scaling Limits of LLMs for Logical Reasoning. Assesses reasoning using puzzles of tunable complexity.
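The Borda-count suggestion for the AidanBench leaderboard refers to a standard rank-aggregation rule: each question (or judge) ranks the models, and a model earns points according to its position in each ranking instead of by summing raw scores. Below is a minimal Python sketch of the generic rule, assuming every ranking covers the same models; the model names are placeholders, and this is not the leaderboard's actual code.

```python
from collections import defaultdict


def borda_count(rankings):
    """Aggregate rankings (each a list of model names, best first).

    In a ranking of n models, the top model gets n - 1 points, the next
    n - 2, and so on down to 0 for the last. Assumes every ranking
    contains the same set of models.
    """
    scores = defaultdict(int)
    for ranking in rankings:
        n = len(ranking)
        for position, model in enumerate(ranking):
            scores[model] += n - 1 - position
    # Highest total first; ties broken alphabetically for a stable order.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical per-question rankings of three placeholder models.
rankings = [
    ["model-a", "model-b", "model-c"],
    ["model-b", "model-a", "model-c"],
    ["model-a", "model-c", "model-b"],
]
print(borda_count(rankings))  # [('model-a', 5), ('model-b', 3), ('model-c', 1)]
```

For these toy rankings, model-a scores 2 + 1 + 2 = 5, model-b 1 + 2 + 0 = 3 and model-c 0 + 0 + 1 = 1. Because only positions matter, a single question whose raw scores happen to span a much wider range cannot dominate the total.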

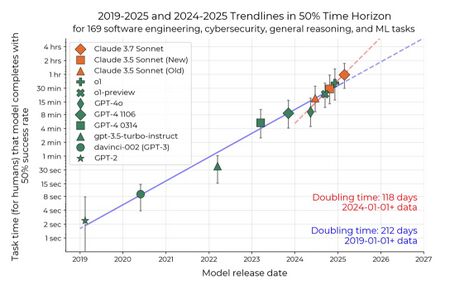
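ZebraLogic's "tunable complexity" comes from varying the size of logic-grid (zebra) puzzles. As we understand the paper, it groups puzzles by the size of their search space, (N!)^M for N houses and M attributes. The short Python sketch below only evaluates that count to show how steeply difficulty can be dialled up; the function name is ours, not taken from the ZebraLogic code.

```python
from math import factorial, log10


def search_space_size(n_houses: int, n_attributes: int) -> int:
    """Unconstrained search space of an N-house, M-attribute logic-grid puzzle.

    Each attribute (e.g. colour, pet, drink) is an independent permutation of
    its N values across the N houses, so there are (N!)^M candidate grids
    before any clue is applied.
    """
    return factorial(n_houses) ** n_attributes


# Show how quickly the space grows for square grids.
for n in range(2, 7):
    size = search_space_size(n, n)
    print(f"{n}x{n}: {size} candidate grids (~10^{log10(size):.1f})")
```

A 3x3 puzzle has only 216 candidate grids, while a 5x5 puzzle already has 120^5, roughly 2.5 x 10^10, which is why modest changes in grid size move puzzles from trivial to very hard.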

Task Length

- 2020-09: Ajeya Cotra: Draft report on AI timelines

- 2025-03: Measuring AI Ability to Complete Long Tasks
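As we understand it, the METR paper summarises each model by a "50% time horizon": it fits a logistic curve of the model's success against the log of how long each task takes a skilled human, then reports the task length at which predicted success drops to 50%. The Python sketch below reproduces only that core idea on made-up data. The function name, the plain Newton's-method fit and the toy numbers are our assumptions, not METR's code, and refinements such as task weighting and bootstrapped error bars are left out.

```python
import math


def fit_time_horizon(task_minutes, successes, iters=50):
    """Return the task length (minutes) at which predicted success is 50%.

    Fits P(success) = sigmoid(a + b * log2(minutes)) by Newton's method
    (unregularised maximum likelihood), so it assumes the outcomes are not
    perfectly separable by task length.
    """
    xs = [math.log2(t) for t in task_minutes]
    a, b = 0.0, 0.0
    for _ in range(iters):
        # Gradient (g) and negated Hessian (h) of the log-likelihood.
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, y in zip(xs, successes):
            p = 1.0 / (1.0 + math.exp(-(a + b * x)))
            w = p * (1.0 - p)
            g0 += y - p
            g1 += (y - p) * x
            h00 += w
            h01 += w * x
            h11 += w * x * x
        # Newton step: solve [[h00, h01], [h01, h11]] @ (da, db) = (g0, g1).
        det = h00 * h11 - h01 * h01
        a += (h11 * g0 - h01 * g1) / det
        b += (h00 * g1 - h01 * g0) / det
    # sigmoid(a + b*x) = 0.5 exactly where a + b*x = 0.
    return 2.0 ** (-a / b)


# Made-up results: human time per task (minutes) and model success (1/0).
minutes = [1, 1, 2, 2, 4, 4, 8, 8, 16, 16, 32, 32, 64, 64]
success = [1, 1, 1, 0, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0]
print(f"50% time horizon: {fit_time_horizon(minutes, success):.1f} minutes")
```

Because the toy outcomes are symmetric around 8 minutes on a log scale, the fitted horizon comes out at 8 minutes. Fitting against log2 of the time, rather than raw minutes, is meant to suit task sets whose lengths span several orders of magnitude.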

Assess Specific Attributes

Various

- LMSYS: Human preference ranking leaderboard

- Tracking AI: "IQ" leaderboard

- LiveBench: A Challenging, Contamination-Free LLM Benchmark

- LLM Thematic Generalization Benchmark
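Human-preference leaderboards such as LMSYS Chatbot Arena turn many pairwise votes ("which answer was better?") into a single rating per model. LMSYS has used online Elo updates and, later, a Bradley-Terry fit for this. The Python sketch below shows only the classic online Elo update; the starting rating of 1000, the K-factor of 32 and the placeholder model names are chosen purely for illustration.

```python
def expected_score(r_a: float, r_b: float) -> float:
    """Probability that A is preferred over B under the Elo (logistic) model."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400))


def elo_update(ratings: dict, winner: str, loser: str, k: float = 32.0) -> None:
    """Apply one pairwise vote in place; ties are omitted for brevity."""
    delta = k * (1.0 - expected_score(ratings[winner], ratings[loser]))
    ratings[winner] += delta
    ratings[loser] -= delta


# Hypothetical vote between two placeholder models, both starting at 1000.
ratings = {"model-a": 1000.0, "model-b": 1000.0}
elo_update(ratings, "model-a", "model-b")
print(ratings)  # {'model-a': 1016.0, 'model-b': 984.0}
```

An upset win by a lower-rated model moves both ratings more than an expected win does, since the update is proportional to one minus the winner's expected score. Online Elo also depends on the order in which votes arrive; one motivation for fitting all votes jointly, as a Bradley-Terry model does, is to remove that order dependence.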

Hallucination

Software/Coding

Visual

- 2025-03: Can Large Vision Language Models Read Maps Like a Human? MapBench

Conversation

- 2025-01: MultiChallenge: A Realistic Multi-Turn Conversation Evaluation Benchmark Challenging to Frontier LLMs (project, code)

Creativity

- 2024-10: AI as Humanity's Salieri: Quantifying Linguistic Creativity of Language Models via Systematic Attribution of Machine Text against Web Text

- 2024-11: AidanBench: Evaluating Novel Idea Generation on Open-Ended Questions (code)

- 2024-12: LiveIdeaBench: Evaluating LLMs' Scientific Creativity and Idea Generation with Minimal Context

- LLM Creative Story-Writing Benchmark

Reasoning

- ENIGMAEVAL: "reasoning" leaderboard (paper)

- Sober Reasoning Leaderboard

Assistant/Agentic

- GAIA: a benchmark for General AI Assistants

- Galileo AI Agent Leaderboard

- Smolagents LLM Leaderboard: LLMs powering agents

- OpenAI PaperBench: Evaluating AI’s Ability to Replicate AI Research (paper, code)

Science

See: Science Benchmarks