Difference between revisions of "AI predictions"

Edits by KevinYager (→Surveys of Opinions/Predictions; →Alignment)


Revision as of 18:14, 18 March 2025


AGI Achievable

- Yoshua Bengio: Managing extreme AI risks amid rapid progress

- Leopold Aschenbrenner: Situational Awareness: Counting the OOMs

- Richard Ngo: Visualizing the deep learning revolution

- Katja Grace: Survey of 2,778 AI authors: six parts in pictures

- Epoch AI: Machine Learning Trends

- AI Digest: How fast is AI improving?

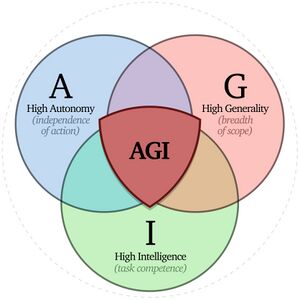

AGI Definition

- 2023-11: Allan Dafoe, Shane Legg, et al.: Levels of AGI for Operationalizing Progress on the Path to AGI

- 2024-04: Bowen Xu: What is Meant by AGI? On the Definition of Artificial General Intelligence

Economic and Political

- 2023-12: My techno-optimism: "defensive acceleration" (Vitalik Buterin)

- 2024-03: Noah Smith: Plentiful, high-paying jobs in the age of AI: Comparative advantage is very subtle, but incredibly powerful. (video)

- 2024-03: Scenarios for the Transition to AGI (AGI leads to wage collapse)

- 2024-06: Situational Awareness (Leopold Aschenbrenner) - select quotes, podcast, text summary of podcast

- 2024-06: AI and Growth: Where Do We Stand?

- 2024-09: OpenAI: Infrastructure is Destiny: Economic Returns on US Investment in Democratic AI

- 2024-12: By default, capital will matter more than ever after AGI (L Rudolf L)

- 2025-01: The Intelligence Curse: With AGI, powerful actors will lose their incentives to invest in people

- 2025-01: Microsoft: The Golden Opportunity for American AI

- 2025-01: AGI Will Not Make Labor Worthless

- 2025-01: AI in America: OpenAI's Economic Blueprint (blog)

- 2025-01: How much economic growth from AI should we expect, how soon?

- 2025-02: Morgan Stanley: The Humanoid 100: Mapping the Humanoid Robot Value Chain

- 2025-02: The Anthropic Economic Index: Which Economic Tasks are Performed with AI? Evidence from Millions of Claude Conversations

- 2025-02: Strategic Wealth Accumulation Under Transformative AI Expectations

- 2025-02: Tyler Cowen: Why I think AI take-off is relatively slow

Job Loss

- 2023-03: GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models

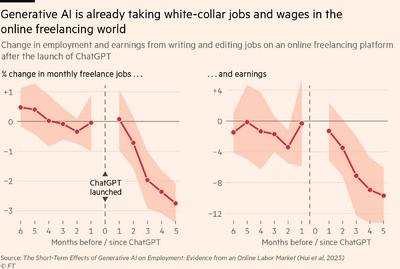

- 2023-08: The Short-Term Effects of Generative Artificial Intelligence on Employment: Evidence from an Online Labor Market

- 2023-09: What drives UK firms to adopt AI and robotics, and what are the consequences for jobs?

- 2023-11: New Analysis Shows Over 20% of US Jobs Significantly Exposed to AI Automation In the Near Future

- 2024-01: Duolingo cuts 10% of its contractor workforce as the company embraces AI

- 2024-02: Gen AI is a tool for growth, not just efficiency: Tech CEOs are investing to build their workforce and capitalise on new opportunities from generative AI. That’s a sharp contrast to how their peers view it.

- 2024-04: AI is Poised to Replace the Entry-Level Grunt Work of a Wall Street Career

- 2024-07: AI Is Already Taking Jobs in the Video Game Industry: A WIRED investigation finds that major players like Activision Blizzard, which recently laid off scores of workers, are using generative AI for game development

- 2024-08: Klarna: AI lets us cut thousands of jobs - but pay more

- 2025-01: AI and Freelancers: Has the Inflection Point Arrived?

- 2025-01: Yes, you're going to be replaced: So much cope about AI

Overall

- 2025-03: Kevin Roose (New York Times): Powerful A.I. Is Coming. We’re Not Ready. Three arguments for taking progress toward artificial general intelligence, or A.G.I., more seriously — whether you’re an optimist or a pessimist.

- 2025-03: Nicholas Carlini: My Thoughts on the Future of "AI": "I have very wide error bars on the potential future of large language models, and I think you should too."

Surveys of Opinions/Predictions

- 2025-02: Why do Experts Disagree on Existential Risk and P(doom)? A Survey of AI Experts

- 2025-02: Nicholas Carlini: AI forecasting retrospective: you're (probably) over-confident

Bad Outcomes

- 2019-03: What failure looks like

- 2025-01: Gradual Disempowerment: Systemic Existential Risks from Incremental AI Development (web version)

Intelligence Explosion

Psychology

Science & Technology Improvements

- 2024-09: Sam Altman: The Intelligence Age

- 2024-10: Dario Amodei: Machines of Loving Grace

- 2024-11: Google DeepMind: A new golden age of discovery

- 2025-03: Fin Moorhouse, Will MacAskill: Preparing for the Intelligence Explosion

Plans

- A Narrow Path: How to Secure our Future

- Marius Hobbhahn: What’s the short timeline plan?

- Building CERN for AI: An institutional blueprint

- AGI, Governments, and Free Societies

Philosophy

- Dan Faggella:

- Joe Carlsmith: 2024: Otherness and control in the age of AGI

- Anthony Aguirre:

Alignment

- What are human values, and how do we align AI to them? (blog)

- Joe Carlsmith: 2025: How do we solve the alignment problem? Introduction to an essay series on paths to safe, useful superintelligence

- What is it to solve the alignment problem? Also: to avoid it? Handle it? Solve it forever? Solve it completely? (audio version)

- When should we worry about AI power-seeking? (audio version)

- Paths and waystations in AI safety (audio version)

- AI for AI safety (audio version)

Strategic/Technical

- 2025-03: AI Dominance Requires Interpretability and Standards for Transparency and Security

Strategic/Policy

- Amanda Askell, Miles Brundage, Gillian Hadfield: The Role of Cooperation in Responsible AI Development

- Dan Hendrycks, Eric Schmidt, Alexandr Wang: Superintelligence Strategy

- Anthony Aguirre: Keep The Future Human (essay)

- The 4 Rules That Could Stop AI Before It’s Too Late (video) (2025)

- Oversight: Registration required for training >10²⁵ FLOP and inference >10¹⁹ FLOP/s (~1,000 B200 GPUs @ $25M). Build cryptographic licensing into hardware.

- Computation Limits: Ban on training models >10²⁷ FLOP or inference >10²⁰ FLOP/s.

- Strict Liability: Hold AI companies responsible for outcomes.

- Tiered Regulation: Low regulation on tool-AI, strictest regulation on AGI (general, capable, autonomous systems).

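As a rough sanity check on how the "4 Rules" compute thresholds relate to one another (registration above 10²⁵ FLOP of training or 10¹⁹ FLOP/s of inference; a ban above 10²⁷ FLOP or 10²⁰ FLOP/s), the Python sketch below works through the arithmetic. It assumes the quoted figures are exact, that a cluster runs continuously at 100% of the stated rate, and that the inference-rate threshold can stand in for sustained training throughput. The helpers `implied_per_gpu_rate`, `days_to_reach`, and `classify_training_run` are hypothetical illustrations, not part of the proposal itself.

```python
# Thresholds as summarized in the "4 Rules" list (Anthony Aguirre, Keep The Future Human).
OVERSIGHT_TRAINING_FLOP = 1e25   # registration threshold: total training compute
OVERSIGHT_RATE_FLOP_S = 1e19     # registration threshold: inference throughput
CAP_TRAINING_FLOP = 1e27         # ban threshold: total training compute
CAP_RATE_FLOP_S = 1e20           # ban threshold: inference throughput

# Rough hardware equivalence quoted next to the oversight threshold.
GPU_COUNT = 1_000                # "~1,000 B200 GPUs"
CLUSTER_COST_USD = 25e6          # "@ $25M"

SECONDS_PER_DAY = 86_400


def implied_per_gpu_rate(total_rate_flop_s: float, gpus: int) -> float:
    """FLOP/s per GPU if a total rate is split evenly across `gpus` devices."""
    return total_rate_flop_s / gpus


def days_to_reach(total_flop: float, rate_flop_s: float) -> float:
    """Days of continuous operation at `rate_flop_s` (assumed 100% utilization)
    needed to accumulate `total_flop` operations."""
    return total_flop / rate_flop_s / SECONDS_PER_DAY


def classify_training_run(total_flop: float) -> str:
    """Hypothetical helper: place a training run relative to the two thresholds.
    Uses strict '>' comparisons to match the '>10^25' / '>10^27' wording."""
    if total_flop > CAP_TRAINING_FLOP:
        return "prohibited"
    if total_flop > OVERSIGHT_TRAINING_FLOP:
        return "registration required"
    return "below thresholds"


if __name__ == "__main__":
    per_gpu = implied_per_gpu_rate(OVERSIGHT_RATE_FLOP_S, GPU_COUNT)
    print(f"Implied per-GPU rate: {per_gpu:.1e} FLOP/s")
    print(f"Implied cost per GPU: ${CLUSTER_COST_USD / GPU_COUNT:,.0f}")
    print(f"Days to 1e25 FLOP at 1e19 FLOP/s: "
          f"{days_to_reach(OVERSIGHT_TRAINING_FLOP, OVERSIGHT_RATE_FLOP_S):.1f}")
    print(f"Days to 1e27 FLOP at 1e20 FLOP/s: "
          f"{days_to_reach(CAP_TRAINING_FLOP, CAP_RATE_FLOP_S):.1f}")
    print(classify_training_run(5e25))
```

Under these assumptions the oversight threshold works out to about 10¹⁶ FLOP/s and $25,000 per GPU. A cluster running exactly at 10¹⁹ FLOP/s would need about 11.6 days to accumulate 10²⁵ FLOP, and one at the 10²⁰ FLOP/s cap would need about 116 days to reach 10²⁷ FLOP. Real utilization is well below 100%, so actual wall-clock times would be longer.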