GISAXS measurement time

The time required for a GISAXS measurement varies with many factors. The most important are the flux of the x-ray source and the inherent scattering power of the sample in question.

Generally, a single exposure (2D image) takes on the order of 1 s to 60 s at a synchrotron beamline. The same measurement on a lab-scale instrument takes considerably longer: typically 10 minutes to a couple of hours for a single exposure.

A full measurement on a single sample typically involves aligning the sample (which takes 2-5 minutes), followed by exposures at a variety of incident angles. It is usually a good idea to collect data below the critical angle, near the critical angle, and above the critical angle. This multi-angle data makes eventual data interpretation easier: the sub-critical-angle data provides a measure of the structure in the near-surface region, while the data above the critical angle is complementary in that it probes the entire depth of the film. Measurements near the critical angle exhibit strong intensity enhancements, which are useful for weak signals; measurements well above the critical angle yield lower scattering intensity, but the data is less complicated by refraction distortion and dynamic scattering (see also GTSAXS).

In total, sample alignment and measurement at 3-8 different incident angles will thus consume approximately 2-10 minutes on a synchrotron instrument. Full characterization of a sample may also involve collecting a reflectivity curve, which increases the measurement time further.


Factors affecting exposure time

The amount of time required for a single 2D exposure depends on all the same factors that affect the overall scattering intensity. It also depends on the desired signal-to-noise ratio (see below).

- Beam flux: Higher flux decreases the required exposure time. The exposure time is inversely proportional to the flux, so doubling the beam flux will halve the measurement time. Undulator beamlines at modern synchrotrons have exceedingly high flux: the required measurement time may be only milliseconds.

- Sample volume: Larger samples scatter more. Note that in transmission mode, if the sample is too thick, it will instead attenuate the scattering (due to absorption or multiple scattering). In grazing-incidence experiments, larger sample sizes increase scattering power and thus decrease measurement time. However, this is only useful up to a point: if the sample is large enough to fully capture the incident beam (i.e. there is no spill-over from the beam projection), then increasing the sample dimensions further will not change anything.

- Ordering: More highly ordered systems scatter more strongly.

- Scattering contrast: The larger the electron-density difference between the structured phases, the stronger the signal.
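As a rough illustration of the flux scaling described in the list above, the following sketch rescales a known exposure time to a different beam flux. The function name and the example flux values are hypothetical, and the sketch assumes that everything else (sample, geometry, detector) stays the same.

```python
def scaled_exposure_time(reference_time_s, reference_flux, new_flux):
    """Estimate the exposure time that collects the same number of counts
    at new_flux as reference_time_s collected at reference_flux.

    Assumes counts scale linearly with flux and time (no saturation,
    no sample damage); the flux units only need to be consistent.
    """
    if reference_flux <= 0 or new_flux <= 0:
        raise ValueError("flux values must be positive")
    return reference_time_s * reference_flux / new_flux


# Doubling the flux halves the required exposure time.
print(scaled_exposure_time(10.0, 1e11, 2e11))  # 5.0
```

The same proportionality could be applied to the other factors in the list (scattering volume, contrast), but only as long as the sample does not already intercept the full beam.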

Signal-to-noise ratio

The signal-to-noise ratio (SNR) is a measure of data quality, wherein one compares the strength of the signal of interest to the complicating background noise. In x-ray scattering measurements, various kinds of background (detector background, substrate scattering, instrument windows, air scattering, etc.) worsen the SNR. However, the largest source of noise is frequently simply shot noise: the inherent counting statistics arising from the small number of photons being detected. For integer counting, the SNR goes as:

$$\mathrm{SNR} = \frac{\mu}{\sigma} = \frac{N}{\sqrt{N}} = \sqrt{N}$$

where $\mu$ is the (average) signal, $\sigma$ is the standard deviation of the signal (i.e. the noise), and $N$ is the integer number of counts. Thus, for a pixel that has 10,000 counts, the SNR is 100.

The above equation makes it clear that improving the SNR is in general difficult: to improve the data quality by a factor of 10, one must increase the measurement time by a factor of $10^2 = 100$. In other words, slightly increasing measurement times does not appreciably improve data quality (one must instead increase measurement times by a meaningful factor).
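The square-root scaling can be checked with a few lines of arithmetic. This is a minimal sketch that assumes pure shot noise (Poisson counting statistics) and ignores all other background sources; the function names are illustrative only.

```python
import math


def shot_noise_snr(counts):
    """SNR of a pixel with the given number of counts, assuming pure shot noise."""
    if counts < 0:
        raise ValueError("counts must be non-negative")
    return math.sqrt(counts)


def time_factor_for_snr_gain(snr_gain):
    """Factor by which the exposure time must grow to improve the SNR by snr_gain."""
    return snr_gain ** 2


print(shot_noise_snr(10_000))          # 100.0
print(time_factor_for_snr_gain(10))    # 100
# Doubling the exposure time improves the SNR only by sqrt(2), about 1.41.
print(shot_noise_snr(20_000) / shot_noise_snr(10_000))
```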

Overcounting

In general, longer exposure times yield better-quality data (higher SNR). However, one must be careful to avoid overcounting.

Detector saturation

X-ray detectors can be saturated if the signal is too large. Every detector technology has a limit, with respect to the maximum instantaneous counting rate (which can only be mitigated by attenuating the beam), the maximum pixel count value, and (possibly) a maximum global (whole image) count rate/value.

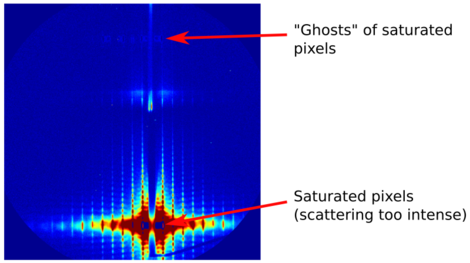

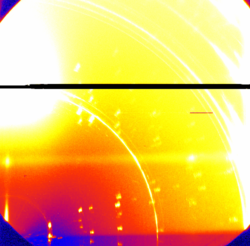

Fiber-coupled CCD detectors typically have a per-pixel dynamic range of, e.g., 16-bit = 65,536 counts. If a given pixel has more counts than this, the data file will store an erroneous value. The value may be pegged at the max-count, or may instead have a nonsensical value like -1, or the most-significant-bits may be lost, in which case the pixel will have an intermediate (possibly random-seeming) value. This saturation makes data within the saturated pixels useless. Worse still, CCD readout technology introduces cross-talk between pixels (and even between readout modules: i.e. between different regions of the detector image). The end result is that a saturated image may have significant artifacts: the pixels near the saturation region will have erroneous values, entire lines of pixels may become saturated, or 'ghosts' of the saturated data may appear throughout the image.

Some detectors exhibit non-linear response even before saturation occurs. This non-linearity makes quantitative interpretation of the data impossible. For any given detector, one must take care to avoid collecting data in the saturated or near-saturated (non-linear) regimes. (On the other hand, if all one wants is a qualitative sense of the sample structure, these artifacts may not matter, as long as one is aware of them.)

Modern hybrid pixel detectors (e.g. the Dectris Pilatus or Eiger technologies) tend to have a much larger dynamic range: e.g. they are 20-bit, meaning one only saturates at ~1 million counts within a pixel. This higher dynamic range is much more forgiving (and these detectors also tend not to exhibit cross-talk between pixels); nevertheless, one must still be careful to avoid saturation.
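A quick way to check an image for the problems described above is to count pixels at the maximum value, pixels close to it, and pixels holding nonsensical negative values. The sketch below uses NumPy and assumes the image has already been loaded into an array; the `nonlinear_fraction` threshold of 0.9 is a hypothetical placeholder, since the onset of non-linearity depends on the specific detector.

```python
import numpy as np


def saturation_report(image, bit_depth=16, nonlinear_fraction=0.9):
    """Count suspicious pixels in a detector image.

    bit_depth: per-pixel dynamic range (16 for a typical CCD, 20 for the
        hybrid pixel detectors mentioned in the text).
    nonlinear_fraction: hypothetical fraction of the maximum count above
        which the response is treated as possibly non-linear.
    """
    image = np.asarray(image)
    max_count = 2 ** bit_depth - 1
    saturated = image >= max_count
    near_saturated = (image >= nonlinear_fraction * max_count) & ~saturated
    invalid = image < 0  # e.g. pixels stored as -1
    return {
        "saturated": int(saturated.sum()),
        "near_saturated": int(near_saturated.sum()),
        "invalid": int(invalid.sum()),
    }


# Tiny synthetic example: one saturated, one near-saturated, one invalid pixel.
example = np.array([[100, 65535], [60000, -1]], dtype=np.int32)
print(saturation_report(example))
```

A check like this only flags the pixels themselves; it does not detect the cross-talk artifacts (streaks, ghosts) that a saturated CCD image may also contain.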

Sample damage

Another concern with respect to counting time is radiation damage. Synchrotron x-ray beams are extremely bright, and can easily destroy the structure one is attempting to measure. The effect is most pronounced for soft materials (polymers, etc.); and is even more rapid in the presence of oxygen, humidity, or solvent vapours. Performing measurements with the sample in vacuum can help forestall (but not eliminate) sample damage.

One should always be on the lookout for signs of sample damage in the GISAXS data itself. If the same spot is measured repeatedly, and the scattering pattern appears to be changing (in particular becoming broader and 'uglier'), then sample damage may be occurring.

Mitigation

The simplest way to mitigate the above effects is to keep the exposure time as short as possible (while still obtaining the data one needs). One can also split the measurement into multiple shorter frames. It is quite easy to sum the frames together after the fact in order to recover a long-exposure (good SNR) image. In addition, one can inspect the individual frames and discard those collected after sample damage has occurred. This multi-exposure approach is also a simple way to avoid detector saturation: each individual image will not be saturated, and the images can be summed together into a higher bit-depth data file.
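The frame-summing idea can be sketched with NumPy as follows. This is one possible approach, not a prescribed procedure: it assumes the frames are already loaded as equally sized arrays, uses a 64-bit accumulator so the sum cannot wrap around, and skips any frame containing a saturated pixel. The function name and the `max_count` default are illustrative.

```python
import numpy as np


def sum_frames(frames, max_count=65535):
    """Sum short-exposure frames into a 64-bit image.

    Frames containing a pixel at or above max_count are skipped.
    Returns the summed image and the number of frames used.
    """
    total = np.zeros(np.shape(frames[0]), dtype=np.int64)
    n_used = 0
    for frame in frames:
        frame = np.asarray(frame)
        if frame.max() >= max_count:
            continue  # skip frames containing saturated pixels
        total += frame.astype(np.int64)
        n_used += 1
    return total, n_used


# Three synthetic 16-bit frames with 40,000 counts in every pixel.
frames = [np.full((2, 2), 40000, dtype=np.uint16) for _ in range(3)]
total, n_used = sum_frames(frames)
print(total[0, 0], n_used)  # 120000 3

# Adding the 16-bit arrays directly would wrap around instead:
print((frames[0] + frames[1])[0, 0])  # 14464, not 80000
```

Frames recorded after the onset of sample damage can simply be left out of the list passed to the function.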

Another simple trick to avoid sample damage is to periodically shift the x-ray beam along the (presumably homogeneous) sample. Since the x-ray beam is typically only ~100 microns wide, and most samples are ~5 mm wide, there is ample room to conduct subsequent measurements on 'fresh' spots. Note that substantial sample translation may require realignment of the sample.
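To get a feel for how many fresh spots are available, the sketch below lists candidate translation positions across the sample. The spacing factor (here, two beam widths between spot centres) and the edge margin are hypothetical choices, not recommendations from the text; the sample and beam widths are the typical values quoted above.

```python
def fresh_spot_positions(sample_width_mm=5.0, beam_width_mm=0.1,
                         spacing_factor=2.0, edge_margin_mm=0.5):
    """Return translation positions (mm, relative to the sample centre).

    spacing_factor: centre-to-centre spacing in units of the beam width
        (hypothetical; larger values keep exposed regions further apart).
    edge_margin_mm: hypothetical region near each edge that is avoided.
    """
    step = beam_width_mm * spacing_factor
    usable = sample_width_mm - 2 * edge_margin_mm
    if step <= 0 or usable < 0:
        raise ValueError("invalid geometry")
    # Small tolerance guards against floating-point round-off in the division.
    n_spots = int(usable / step + 1e-9) + 1
    start = -usable / 2
    return [round(start + i * step, 6) for i in range(n_spots)]


positions = fresh_spot_positions()
print(len(positions), positions[0], positions[-1])  # 21 -2.0 2.0
```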